08 May 2026

In our previous article, we explored why large language models (LLMs) often struggle with spatial queries.

While they can describe places and extract spatial hints from text, they frequently fail when asked to compute routes, distances or real-world constraints. The reason is structural: language models are designed to predict text, while spatial reasoning requires deterministic computation over geographic structures.

Once this limitation is understood, a natural question follows: how can LLMs be enabled to reason correctly about the physical world?

Two broad approaches are emerging.

One approach focuses on improving the model itself. The idea is that if spatial knowledge is embedded deeply enough into the model’s representation of the world, it may become capable of reasoning more reliably about geographic relationships.

This has led to a growing interest in techniques such as:

geographic ontologies and taxonomies

knowledge graphs connecting places, roads and attributes

vector embeddings of geospatial datasets

specialized “GeoLLM” models trained on map data

The goal of these methods is to translate geographic knowledge into formats that language models can learn during training. By exposing models to structured relationships between places, roads and features, researchers have demonstrated improvements in the model’s ability to infer spatial relationships from language.

This approach has shown genuine results in several areas. It can improve how models interpret geographic concepts, help them retrieve relevant locations from large datasets and strengthen their ability to recognize and reason about place-related text.
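As a rough illustration of the retrieval side of this approach, the sketch below ranks places by cosine similarity between embedding vectors. The three-dimensional vectors and place names are invented for the example; real embeddings would come from a trained model and have far more dimensions.

```python
import math

# Hypothetical toy embeddings (assumption: real ones come from a trained model).
PLACE_VECTORS = {
    "harbour cafe": (0.9, 0.1, 0.2),
    "ev charging hub": (0.1, 0.9, 0.3),
    "city museum": (0.2, 0.2, 0.9),
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def nearest_place(query_vector, vectors):
    """Return the name whose embedding is most similar to the query."""
    return max(vectors, key=lambda name: cosine(query_vector, vectors[name]))


best_match = nearest_place((0.8, 0.2, 0.1), PLACE_VECTORS)
print(best_match)
```

Note that this finds places that are semantically similar to the query. It says nothing about how far apart they are on the ground.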

However, enriching a model with geographic information does not fundamentally change the nature of the model itself. Emergent spatial reasoning does improve meaningfully with scale and curated training data, so the limitation is structural, not total. Even when trained on spatial data, a language model still predicts tokens based on probability. It does not compute routes across a road graph, evaluate traffic flows in real time or enforce geometric constraints between coordinates.

For tasks involving interpretation, classification or retrieval of geographic information, this enrichment helps. But the model becomes better at describing geography while still relying on approximations when asked to compute it.
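To make the contrast concrete, here is a minimal sketch of what computing a route over a road graph involves, using Dijkstra's algorithm. The graph, node names and distances are hypothetical placeholders, not real map data, and production routing engines use far richer graphs and cost models.

```python
import heapq

# Hypothetical road graph: node -> {neighbour: distance in km}.
ROAD_GRAPH = {
    "A": {"B": 4.0, "C": 2.0},
    "B": {"A": 4.0, "C": 1.0, "D": 5.0},
    "C": {"A": 2.0, "B": 1.0, "D": 8.0},
    "D": {"B": 5.0, "C": 8.0},
}


def shortest_route(graph, origin, destination):
    """Return (total_cost, path) for the cheapest route, via Dijkstra."""
    queue = [(0.0, origin, [origin])]
    settled = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == destination:
            return cost, path
        if node in settled:
            continue  # a cheaper path to this node was already processed
        settled.add(node)
        for neighbour, weight in graph[node].items():
            if neighbour not in settled:
                heapq.heappush(queue, (cost + weight, neighbour, path + [neighbour]))
    raise ValueError(f"no route from {origin} to {destination}")


cost, path = shortest_route(ROAD_GRAPH, "A", "D")
print(cost, path)  # 8.0 ['A', 'C', 'B', 'D']
```

The same input always yields the same route, which is the property a token-predicting model cannot guarantee.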

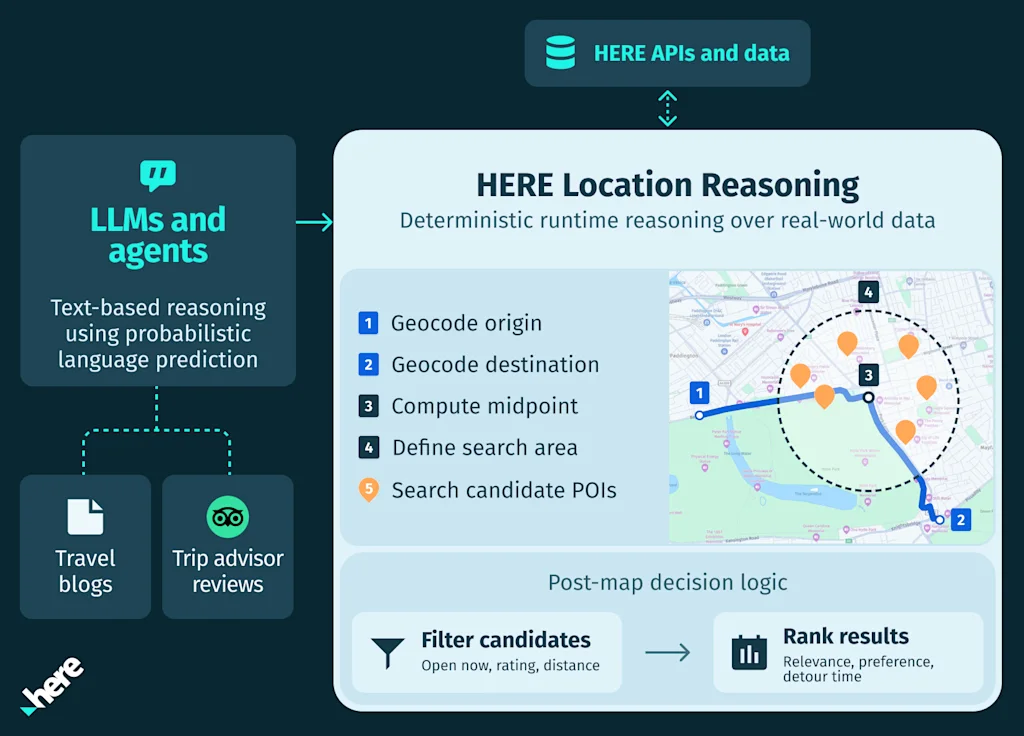

A second approach treats spatial reasoning differently. Instead of attempting to teach the language model to perform geographic computation internally, the model is used to interpret the user’s request and orchestrate specialized systems that execute the spatial logic directly.

In this architecture, the language model focuses on what it does best: understanding natural language, interpreting user intent and translating requests into structured actions.

The actual spatial reasoning—routing, distance calculation, midpoint computation, traffic analysis and location search—is performed by deterministic geospatial engines operating on real-world map data.
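As one small example of such a deterministic calculation, the sketch below computes great-circle distance with the haversine formula. The city coordinates are approximate and supplied here for illustration, and the result is a straight-line distance over a spherical Earth, not a road distance.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, a common approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Approximate city-centre coordinates (assumption for illustration).
BORDEAUX = (44.8378, -0.5792)
MONTPELLIER = (43.6108, 3.8767)

distance_km = haversine_km(*BORDEAUX, *MONTPELLIER)
print(f"{distance_km:.1f} km")
```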

For example, a query such as:

“Find a coffee stop near an EV charging station halfway along my route from Bordeaux to Montpellier.”

requires several spatial steps:

identifying the origin and destination

computing the route across the road network

determining the midpoint of that route

searching for candidate places near that midpoint

evaluating travel time and arrival constraints

These steps involve calculations across road graphs, geographic coordinates and real-time signals such as traffic and transit data. Instead of attempting to infer these relationships from text, the system performs them directly using spatial computation.
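The midpoint and nearby-search steps can be sketched as follows. This is a simplified illustration that assumes the route is a polyline in a projected coordinate system measured in kilometres. The route shape and place names are hypothetical, and a real system would measure along the road network and often by travel time rather than distance.

```python
import math


def midpoint_along(polyline):
    """Return the point halfway along a polyline, measured by path length."""
    if len(polyline) < 2:
        raise ValueError("need at least two points")
    segments = list(zip(polyline, polyline[1:]))
    lengths = [math.dist(a, b) for a, b in segments]
    remaining = sum(lengths) / 2
    for (a, b), length in zip(segments, lengths):
        if length > 0 and remaining <= length:
            t = remaining / length  # fraction of this segment to travel
            return (a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1]))
        remaining -= length
    return polyline[-1]


def places_near(places, centre, radius_km):
    """Return sorted names of places within radius_km of centre."""
    return sorted(name for name, pos in places.items()
                  if math.dist(pos, centre) <= radius_km)


# Hypothetical route and candidate places, in km on a flat plane.
ROUTE = [(0, 0), (3, 0), (3, 4), (10, 4)]
CAFES = {"Cafe A": (3.5, 4.2), "Cafe B": (9.0, 4.0), "Cafe C": (2.0, 3.0)}

halfway = midpoint_along(ROUTE)
print(halfway)                           # (3.0, 4.0)
print(places_near(CAFES, halfway, 1.0))  # ['Cafe A']
```

The halfway point along the route, (3.0, 4.0), differs from the straight-line midpoint between the endpoints, (5.0, 2.0), which is why the route has to be computed before the search can be placed.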

The language model acts as a planner and coordinator, translating natural language into the sequence of operations required to solve the problem.
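One way to picture this planner role is a structured plan that a dispatcher executes step by step. The sketch below is an assumption about how such a system might look, not a description of any particular product: the plan format, the `$name` reference convention and the tool names are all hypothetical, and the tools are stubs standing in for real geospatial services.

```python
import json

# Hypothetical plan in the shape a language model might emit.
PLAN_JSON = """
[
  {"tool": "geocode", "args": {"query": "Bordeaux"}, "save_as": "origin"},
  {"tool": "geocode", "args": {"query": "Montpellier"}, "save_as": "destination"},
  {"tool": "route",
   "args": {"origin": "$origin", "destination": "$destination"},
   "save_as": "route"}
]
"""


def geocode(query):
    # Stub: a real system would call a geocoding service here.
    return {"place": query}


def route(origin, destination):
    # Stub: a real system would call a routing engine here.
    return {"from": origin["place"], "to": destination["place"]}


TOOLS = {"geocode": geocode, "route": route}


def resolve(value, results):
    """Replace a "$name" reference with the result saved under that name."""
    if isinstance(value, str) and value.startswith("$"):
        return results[value[1:]]
    return value


def run_plan(plan, tools):
    """Execute each step in order, passing earlier results to later steps."""
    results = {}
    for step in plan:
        if step["tool"] not in tools:
            raise KeyError(f"unknown tool: {step['tool']}")
        args = {key: resolve(value, results) for key, value in step["args"].items()}
        results[step["save_as"]] = tools[step["tool"]](**args)
    return results


results = run_plan(json.loads(PLAN_JSON), TOOLS)
print(results["route"])  # {'from': 'Bordeaux', 'to': 'Montpellier'}
```

Rejecting unknown tool names keeps the model's output constrained to operations the system actually supports.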

It is worth acknowledging that this approach carries its own constraints: runtime orchestration introduces latency, creates dependency on external APIs and adds potential failure points if those services are unavailable or return errors. Robust systems need to account for these trade-offs.
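A common way to account for transient failures is to retry with exponential backoff and surface the error if the service stays down. The sketch below is a minimal illustration. `ServiceUnavailable` and `flaky_route_lookup` are hypothetical names, and the delays are arbitrary.

```python
import time


class ServiceUnavailable(Exception):
    """Hypothetical error raised when a geospatial service call fails."""


def call_with_retry(operation, attempts=3, base_delay_s=0.1, sleep=time.sleep):
    """Call operation(), retrying on ServiceUnavailable with doubling delays."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return operation()
        except ServiceUnavailable:
            if attempt == attempts - 1:
                raise  # out of retries: let the caller handle the failure
            sleep(base_delay_s * 2 ** attempt)


# Simulated service that fails twice, then succeeds.
calls = {"count": 0}


def flaky_route_lookup():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ServiceUnavailable("routing service timed out")
    return "route-ok"


delays = []
result = call_with_retry(flaky_route_lookup, sleep=delays.append)
print(result, delays)  # route-ok [0.1, 0.2]
```

Each retry adds to the latency the user sees, so the number of attempts is itself one of the trade-offs.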

Location AI is situational awareness, not static knowledge.

Both approaches contribute to the broader evolution of artificial intelligence (AI) systems.

Enriching models with geographic knowledge can improve how they interpret spatial language and retrieve relevant information. At the same time, executing spatial reasoning through dedicated systems ensures that geographic calculations remain correct, deterministic and grounded in real-world data.

In practice, the most robust systems combine both elements, often within a single inference call. Language models interpret intent and orchestrate workflows, while specialized geospatial engines perform the calculations that require precise spatial understanding.

This separation allows each component to focus on what it does best: language models provide natural language understanding and intent interpretation, and spatial systems provide geographic correctness.

As AI systems become more deeply embedded in real-world tasks—navigation, logistics, mobility services, urban planning and location-based applications—the need for reliable spatial reasoning will only grow.

Understanding where something is, how locations relate to one another and how movement occurs through physical networks requires more than textual knowledge. It requires computation over maps, road graphs and dynamic signals describing the world as it changes.

This is why enabling AI to operate in the physical world increasingly depends on combining language intelligence with spatial intelligence.

LLMs may interpret what a user wants, but determining how that request maps to the real world requires deterministic computation over geographic data, not probabilistic inference from text alone. The difference matters most at scale. A single imprecise result is a minor inconvenience. Across tens of thousands of queries a day, whether in logistics, mobility or location-based services, the gap between approximate and correct is the gap between a system that works and one that quietly fails its users.

Aleksandra Kovacevic

Sr. Director, Head of Responsible AI
